In the fast-evolving world of artificial intelligence, multimodal AI models are gaining traction across industries. These models integrate data types like text, speech, and images to enhance decision-making and operational efficiency.

Multimodal AI models are AI systems that combine and analyze multiple data types, such as text, images, and speech, at the same time. This approach helps businesses achieve more accurate insights and streamlined operations, but it also presents challenges in integration and scalability.

Curious about how multimodal AI models can revolutionize your business? Continue reading to discover real-world examples, benefits, and how you can harness this technology for your industry.

Get smarter insights and better results with Convin’s multimodal AI!

Understanding Multimodal AI Models

Multimodal AI models are designed to simultaneously process and analyze multiple data types, such as text, audio, images, and even video. By combining these different data inputs, these models can make more informed decisions and generate more accurate outcomes.

What is Multimodal AI?

Multimodal AI models combine machine learning (ML), natural language processing (NLP), and computer vision (CV) to interpret different types of data at once. This allows them to perform tasks that need context no single data type provides, such as reading a customer's intent from both their words and their tone of voice.

- Core Technologies in Multimodal AI: These models leverage several technologies, such as NLP to process text data, ML to learn from patterns in data, and CV to understand and interpret images and visual data.

- Benefits of Multimodal AI

- Improved accuracy in predictions by considering diverse data points.

- The ability to handle more complex, real-world tasks effectively.

- Increased operational efficiency, as multimodal AI models can automate various processes simultaneously.
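One common way to combine data types is "late fusion": each data type is scored by its own model, and the scores are then merged into a single prediction. The sketch below is only an illustration of that idea, not a description of any particular product. The `ModalityScore` class, the weights, and the probabilities are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class ModalityScore:
    """Output of one single-modality model (hypothetical structure)."""
    name: str           # e.g. "text", "audio", "image"
    probability: float  # that model's estimated probability of the positive class
    weight: float       # how much this modality is trusted (assumed, not learned)


def late_fusion(scores: list[ModalityScore]) -> float:
    """Merge per-modality probabilities with a weighted average."""
    total_weight = sum(s.weight for s in scores)
    if total_weight <= 0:
        raise ValueError("at least one modality needs a positive weight")
    return sum(s.probability * s.weight for s in scores) / total_weight


# Made-up scores for a single input seen through three modalities.
scores = [
    ModalityScore("text", probability=0.80, weight=2.0),
    ModalityScore("audio", probability=0.60, weight=1.0),
    ModalityScore("image", probability=0.40, weight=1.0),
]
fused = late_fusion(scores)
print(f"fused probability: {fused:.2f}")
```

In practice the weights would usually be learned from data rather than set by hand, and many systems fuse features earlier inside a single model instead.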

What is Generative AI?

Generative AI refers to a type of AI that is capable of generating new data, content, or solutions based on patterns and examples it has learned from existing data. Unlike traditional AI models, which typically analyze and predict based on input data, generative AI creates new, original outputs.

Generative AI creates images, text, and music, offering value in entertainment, marketing, and design. Multimodal generative AI extends this across data types, supporting content creation, personalized marketing, and virtual assistants.

Understanding multimodal AI models gives us a solid foundation for exploring real-world examples. These examples highlight how technology is already significantly impacting industries.

Reimagine customer experience with Convin’s multimodal AI!

Example of Multimodal AI Models

The applications of multimodal AI models are vast, stretching across many industries, from customer service to healthcare. By combining different data types, these models can solve complex problems and offer more precise solutions.

Let’s explore a few real-world examples of multimodal AI models in action.

- Healthcare Applications

- In healthcare, multimodal AI models combine patient records, medical imaging, and diagnostic texts to improve the accuracy and speed of diagnoses.

- These models can analyze complex medical data, such as X-rays, CT scans, and patient histories, to identify patterns that may go unnoticed by human professionals.

- Customer Service

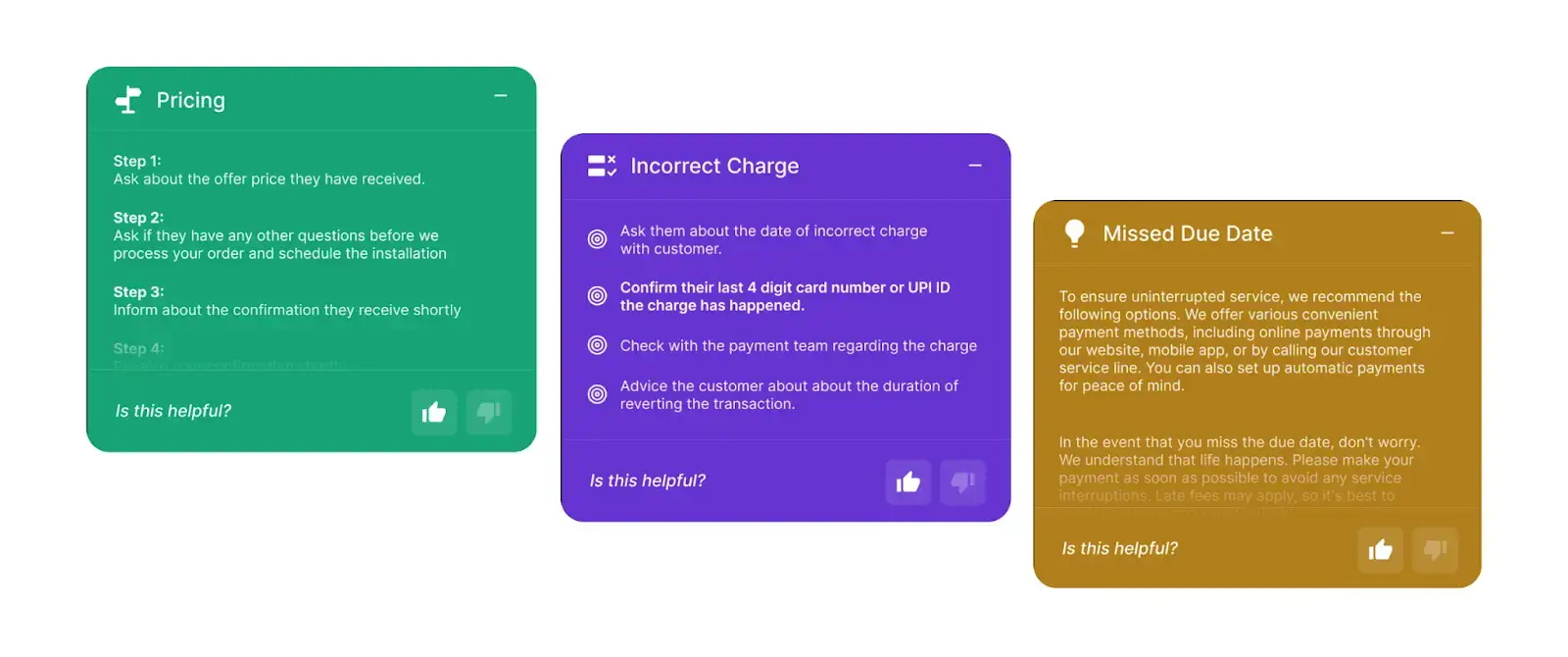

- In contact centers, multimodal AI models transform customer service by integrating voice recognition, speech-to-text, and sentiment analysis.

- This enables AI to interpret emotions, understand queries, and deliver personalized, real-time responses, enhancing customer experience.

- E-commerce

- Online retailers use multimodal AI models to analyze product images, customer reviews, and transaction data to provide tailored product recommendations.

- These models can automatically detect patterns in customer preferences and predict which products are likely to be of interest, improving both customer satisfaction and sales.
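The e-commerce case above can be sketched with "early fusion", where features from each data type are joined into one vector before comparison. This is a minimal illustration under stated assumptions: the function names, the product names, and the hand-written vectors are hypothetical, and real feature vectors would come from trained image, text, and behavior models.

```python
import math


def fuse_features(image_vec, review_vec, purchase_vec):
    """Early fusion: concatenate per-modality feature vectors into one."""
    return list(image_vec) + list(review_vec) + list(purchase_vec)


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors: image style, review sentiment, purchase category (all made up).
customer_profile = fuse_features([1.0, 0.0], [0.9], [1.0, 0.0])
catalog = {
    "running_shoes": fuse_features([1.0, 0.0], [0.8], [1.0, 0.0]),
    "desk_lamp": fuse_features([0.0, 1.0], [0.7], [0.0, 1.0]),
}

# Rank products by how closely their fused vector matches the customer's.
ranked = sorted(
    catalog,
    key=lambda name: cosine_similarity(customer_profile, catalog[name]),
    reverse=True,
)
print(ranked)
```

Simple concatenation treats every feature as equally important, so production systems typically scale or learn the contribution of each data type.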

These examples demonstrate how multimodal AI models are applied in practice. Their ability to combine multiple data streams enhances their accuracy and effectiveness. Let’s now look at how multimodal AI models are impacting logistics.

Maximize operational efficiency with Convin’s multimodal AI! Schedule a demo today.

Multimodal AI Models in Logistics

The logistics industry, with its complex operations and need for optimization, is another area benefiting from multimodal AI models. These models help streamline operations, reduce costs, and improve efficiency by simultaneously processing and analyzing diverse data sources.

Let’s explore how multimodal AI models are transforming logistics.

- Supply Chain Optimization

- Multimodal AI models in logistics can analyze data from various sources, such as GPS, weather reports, and sensor data.

- This AI optimizes delivery routes, predicts delays, and adjusts in real time based on conditions.

- Predictive Maintenance

- By combining machine sensor data with maintenance logs, multimodal AI models can predict when a piece of equipment will likely fail.

- This proactive approach to maintenance helps logistics companies avoid costly downtime and ensures that their operations run smoothly.

- Route Optimization

- Multimodal AI models can integrate real-time traffic, weather, and GPS data to optimize delivery routes.

- These models dynamically adjust routes based on changing conditions, reducing delivery times, cutting fuel costs, and improving customer satisfaction.

- Multimodal LLM

- A multimodal LLM (large language model) can help optimize the flow of goods by incorporating data from various sources.

- It supports tracking and managing shipments, helping keep logistics processes integrated and efficient.
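The route optimization idea described above, adjusting routes as traffic and weather change, can be sketched in a few lines. This is a simplified illustration, not a real routing engine. The `Route` class, the multiplier scales, and the sample numbers are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Route:
    name: str
    base_minutes: float    # travel time under ideal conditions
    traffic_factor: float  # 1.0 = free flow, 1.5 = 50% slower (assumed scale)
    weather_factor: float  # 1.0 = clear, higher = worse (assumed scale)


def estimated_minutes(route: Route) -> float:
    """Scale the ideal travel time by current traffic and weather."""
    return route.base_minutes * route.traffic_factor * route.weather_factor


def pick_route(routes: list[Route]) -> Route:
    """Choose the route with the lowest estimated travel time."""
    return min(routes, key=estimated_minutes)


routes = [
    Route("highway", base_minutes=40, traffic_factor=1.5, weather_factor=1.0),
    Route("city", base_minutes=50, traffic_factor=1.1, weather_factor=1.0),
]
first_choice = pick_route(routes)
print("initial choice:", first_choice.name)

# Conditions change: highway traffic clears, so the choice is re-evaluated.
routes[0].traffic_factor = 1.0
second_choice = pick_route(routes)
print("after update:", second_choice.name)
```

A real system would draw these factors from live GPS, traffic, and weather feeds and would search over many route segments rather than compare two fixed options.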

Using multimodal AI models in logistics improves operational efficiency and enables companies to make smarter, data-driven decisions. Let’s look at why these models are so important in today’s rapidly evolving business environment.

Elevate your contact center’s efficiency with Convin’s multimodal AI!

Importance of Multimodal AI Models

The importance of multimodal AI models is evident in their ability to solve complex problems, enhance customer experiences, and increase efficiency across industries. As more companies adopt these models, they are quickly becoming a cornerstone of digital transformation.

Let’s explore why multimodal AI models are so important.

- Enhanced Customer Experience

- Multimodal AI models can process data from text, voice, and images to create more personalized and efficient customer experiences.

- These models analyze voice tone, sentiment, and text in contact centers to deliver real-time, context-aware responses, boosting engagement and satisfaction.

- Operational Efficiency

- Multimodal AI automates many tasks that would otherwise require significant manual intervention.

- From customer support to inventory management, these models can handle multiple functions simultaneously, reducing human error and improving overall productivity.

- Data-Driven Insights

- Analyzing multiple data types in real time gives businesses more comprehensive insights.

- With multimodal AI models, companies can uncover hidden patterns in customer behavior, predict future trends, and make more informed decisions.
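The contact center scenario above, where voice tone and text are read together, can be illustrated with a toy rule-based sketch. Everything here is an assumption for the example: the word list, the thresholds, the action names, and the `voice_stress` input, which stands in for the output of a hypothetical audio model.

```python
# Toy lexicon of negative words; a real system would use a trained sentiment model.
NEGATIVE_WORDS = {"cancel", "refund", "angry", "terrible", "frustrated"}


def text_sentiment(transcript: str) -> float:
    """Return a score in [-1, 0]: the share of words found in the toy lexicon, negated."""
    words = [w.strip(".,!?").lower() for w in transcript.split()]
    if not words:
        return 0.0
    hits = sum(1 for w in words if w in NEGATIVE_WORDS)
    return -hits / len(words)


def choose_action(transcript: str, voice_stress: float) -> str:
    """Combine the text signal with a voice signal (assumed to lie in [0, 1])."""
    negative_text = text_sentiment(transcript) < -0.1
    stressed_voice = voice_stress > 0.7
    if negative_text and stressed_voice:
        return "escalate_to_supervisor"
    if negative_text or stressed_voice:
        return "offer_empathy_script"
    return "continue_standard_flow"


print(choose_action("I want a refund, this is terrible!", voice_stress=0.9))
print(choose_action("Thanks, that works great", voice_stress=0.2))
```

The point of the sketch is that neither signal alone drives the decision: calm words spoken in a stressed voice still change the response.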

With these compelling benefits, it’s clear that multimodal AI models play a crucial role in enhancing business processes and driving innovation. Let’s now look at how multimodal AI models will continue to evolve.

Drive real-time smart decisions with Convin’s multimodal AI!

The Future of Multimodal AI Models

The future of multimodal AI models is promising. The evolution of multimodal AI will open new opportunities for industries to improve efficiency, create innovative solutions, and provide more personalized services.

- Innovations on the Horizon: Future multimodal AI models will integrate more data types. As more industries adopt AI, these models will become more sophisticated, offering deeper insights and more robust solutions.

- Broader Industry Applications: The applications of multimodal AI models will expand beyond current uses. Industries like finance, education, and legal services will begin leveraging multimodal AI to streamline operations and gain better insights into their data.

- Increased Automation: Automation will continue to be a major driver of multimodal AI models. As the models evolve, their ability to automate complex workflows will grow, further reducing the need for manual labor and improving operational efficiency.

The future of multimodal AI models holds tremendous potential for innovation across industries. Companies that embrace this technology will be at the forefront of digital transformation and operational excellence.

Multimodal AI Models Summed Up

Whether in healthcare, customer service, or logistics, processing diverse data streams allows businesses to make smarter decisions, improve efficiency, and enhance customer experiences.

As the technology continues to evolve, the potential of multimodal AI models will only expand, offering even greater capabilities and benefits. Businesses that leverage multimodal AI today will be well-positioned to thrive in an increasingly competitive and data-driven world.

Enhance agent productivity with Convin’s multimodal AI—schedule your demo now!

FAQs

1. What are the five types of multimodal data?

Five commonly cited types of multimodal data are text, speech, images, video, and sensor data. Multimodal AI models integrate these modalities to process diverse information, enabling more accurate predictions and decision-making.

2. What is an example of a multimodal image?

An example of a multimodal image is an image with embedded text or annotations, where AI can interpret visual elements and textual descriptions. For instance, an image of a product with a caption that describes its features allows AI to analyze both the image and text for insights.

3. What is an example of multimodal art?

Multimodal art combines various media like sound, visuals, and interactive elements. An example would be a digital artwork that incorporates images, videos, and music, which viewers can interact with, engaging multiple senses and creating a dynamic experience.

4. What is an example of a multimodal live experience?

An example of a multimodal live scenario is a live video stream that includes real-time text captions, facial recognition, and live audience interaction. It combines multiple data types—visuals, text, and audio—to enhance the experience for viewers and participants.
